About:

Exploring new approaches to machine-hosted neural-network simulation, and the science behind them.

Your moderator:

John Repici

A programmer who is obsessed with giving experimenters a better environment for developing biologically-guided neural network designs. Author of an introductory book on the subject titled: "Netlab Loligo: New Approaches to Neural Network Simulation". BOOK REVIEWERS ARE NEEDED! Can you help?

Other Blogs/Sites:

Neural Networks

Hardware (Robotics, etc.)

Saturday, March 3. 2012

The site was switched to a new hosting service at the end of February. The blog and glossary were the pieces I was most anxious about, but they seem to have handled the move just fine.

So far, this host seems to be providing much faster responses. It should also provide better up-time.

Responses have gone from often taking 40-70 seconds down to typically less than ten seconds. In fact, I haven't counted a single response longer than 12 seconds yet.

The previous provider would regularly (about once a month) make changes that completely hid most, or all, of the site's content from the search-engines and in-links. Those down-times would typically last from two to six days. Many down-times, including the last one, only ended when I wrote some defensive code to work around their new server-settings.

Hoping this provider will do better in that department as well.

So far, I'm happy with it.

Thursday, January 19. 2012

Spent some time today doing minor edits to glossary entries. Of all the small edits, the most significant change made was to add the following section to the entry for weights.

“ . . . . . . .

Netlab's Compatibility Mode

ANN models that use floating point signed-value weights in the conventional fashion are math-centric. That is, they typically are concerned only with the signed numeric weight-value, rather than with the connection-strength represented by its absolute value. In this case, for example, increasing the weight value will make it more positive, regardless of whether it is representing an excitatory or inhibitory connection.

Netlab's default behavior is to operate directly on connection-strength representations, regardless of how they are implemented internally. Netlab neurons facilitate the conventional practice, however, by allowing it to be specified in the learning method for each weight-layer.

The table below shows how Netlab facilitates compatibility with existing practices. The table documents how the translation is carried out between the traditional math-centric convention, and Netlab's connection-strength-centric convention.

| Operation (rows) / Connection type (columns) | Excitatory | Inhibitory |
|---|---|---|
| Increase | Increase connection strength | Decrease connection strength |
| Decrease | Decrease connection strength | Increase connection strength |

Translations performed when conventional adjustment practice is specified for a connection.

”
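The translation table in the quoted glossary section can be sketched in C roughly as follows. This is only an illustration under stated assumptions: the type and function names (`Connection`, `translate_op`, `adjust_by_value`, `signed_value`) are hypothetical and are not Netlab's actual interface. The sketch also assumes a connection is stored as a type plus a non-negative strength, that adjustment amounts are non-negative, and that strength is clamped at zero.

```c
/* Hypothetical sketch: these names are illustrative, not Netlab's API. */

typedef enum { EXCITATORY, INHIBITORY } ConnType;
typedef enum { VALUE_INCREASE, VALUE_DECREASE } ValueOp;
typedef enum { STRENGTH_INCREASE, STRENGTH_DECREASE } StrengthOp;

/* Assumed representation: a connection type plus a non-negative strength. */
typedef struct {
    ConnType type;
    double strength;
} Connection;

/* Translate a conventional (signed-value) operation into a
   connection-strength operation, following the table above. */
StrengthOp translate_op(ConnType type, ValueOp op)
{
    if (type == EXCITATORY)
        return (op == VALUE_INCREASE) ? STRENGTH_INCREASE : STRENGTH_DECREASE;
    return (op == VALUE_INCREASE) ? STRENGTH_DECREASE : STRENGTH_INCREASE;
}

/* Apply a value-based adjustment by translating it first.
   Assumption: amount >= 0, and strength is clamped at zero so that
   a connection never changes its type. */
void adjust_by_value(Connection *c, ValueOp op, double amount)
{
    if (translate_op(c->type, op) == STRENGTH_INCREASE) {
        c->strength += amount;
    } else {
        c->strength -= amount;
        if (c->strength < 0.0)
            c->strength = 0.0;
    }
}

/* The conventional signed weight value that the strength represents. */
double signed_value(const Connection *c)
{
    return (c->type == EXCITATORY) ? c->strength : -c->strength;
}
```

For example, a value-based increase of 0.25 applied to an inhibitory connection of strength 0.5 reduces its strength to 0.25, which moves its signed value from -0.5 to -0.25. That is "more positive", just as the conventional practice expects.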

One possible analogy for the conventional, value-based, adjustment practice is that of adjusting for a specific water temperature from a faucet. If the water is too cold, for example, adjusting the weight value is comparable to simultaneously increasing the hot and reducing the cold (hot being the negative inhibitory weights, and cold being the positive excitatory weights in this analogy). Conversely, if the water is too hot, it is adjusted by simultaneously decreasing the hot, and increasing the cold.

In this way, Netlab is able to fully support the practice of working directly with the numeric value of a signed weight, but it also supports its own alternative strategy of adjusting connection-strength representations. This strategy seems to be more representative of what has been learned about the cell and molecular biology of neurons. The faucet analogy used above to describe the value-based adjustment is not sufficient to describe this strategy [1].

===========

Notes:

[1] - This is not to say the connection-strength adjustment strategy can't be related with an analogy, just that I have been too lazy, or too unfocused to come up with one that feels satisfyingly apt.

Thursday, January 5. 2012

Linguists have recently discovered [1] that almost all words are metaphorical at their base, and some people (e.g., me) posit that they all are. Though speculative, it is at least conceivable that even the sub-language signaling in the brain, which eventually leads to language, is also metaphorical. Consider that the bell may become a metaphor for food in the mind of Pavlov's dog.

Language is also able to relate ambiguity about the concepts it conveys. The word “life,” for example, can mean life-biology, or life-consciousness. Up until now, it has been perfectly acceptable to use these two meanings interchangeably. There simply has never been an instance of consciousness that existed outside of a biological body — at least none that we could directly experience with our physical senses.

Things may be changing now. . .

Friday, December 16. 2011

Merry Christmas, Happy Hanukkah, and happy new year. May your days be filled with happiness, love, and joy this Christmas season, and may your new year be a blessing to you and others.

-djr

Monday, November 14. 2011

The Glossary entry for William of Ockham here at the site has a new section titled “In Other Words?”. This new section attempts to provide a nutshell explanation of William's original advice more accurately than the nutshell statement commonly used today. The advice in question is commonly referred to as Ockham's Razor. Here's the suggested new nutshell definition from the glossary entry.

"Always express things using the most general representation possible for the context in which the representation is being used."

The glossary entry goes on to clarify that this is just an attempted improvement over the current vague fashion statement, and it welcomes other suggestions.

-djr

Thursday, November 3. 2011

The book on the Netlab project often returns to the notion that learning is merely a form of adaptation and that, conversely, adaptation is merely a form of long-term learning. This, in turn, all fits under the umbrella notion that memory is behavior.

The idea that learning is adaptation is learning is put forward as a possibility, mainly as a better means of discussing the concepts. This (in my opinion) provides a clearer and more converged understanding of how memory works in biological organisms. This could be very wrong, of course, so it's important to describe it properly. That way it, and not a straw man, can be critiqued. This article represents one such attempt to properly describe it. . .

Batesian mimicry is when a non-noxious/non-poisonous plant or animal projects the appearance of a poisonous plant or animal, allowing it to avoid being eaten by predators.

The logic goes that predators which have partaken of the poisonous organism and survived would have become very sick, and would have learned to avoid ingesting anything that appears to be that organism in the future. This includes organisms that are not poisonous, but merely look, or act, like the poisonous organism.

Thursday, October 13. 2011

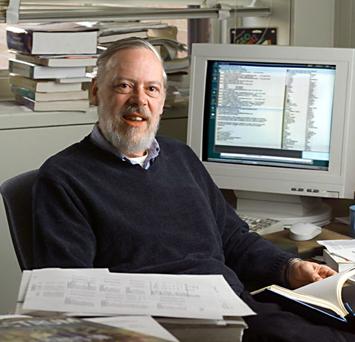

Dennis Ritchie, the creator of the C programming language, died on Saturday after battling a long illness. The C programming language, arguably, changed the world. It can be found at the heart of most modern computer applications, operating systems, and successor programming languages.

Dennis Ritchie

Creator of the C programming language

9 September 1941 — 8 October 2011

There's an obituary, and a very well researched history, at the Guardian

From his book, "The C Programming Language"

```c
main()
{
    printf("hello, world\n");
}
```

Stand Out Publishing
